Alphabet’s Share Price Plummets as Google Bard Gives the Wrong Answer

Alphabet, the parent company of Google, saw its shares drop by 8% following the launch of its new artificial intelligence (AI) search assistant, Bard. This marked the largest one-day fall in Alphabet’s value since October 2022.

Wrong Answer Generated by Bard Raises Concerns About Accuracy of AI-Generated Responses

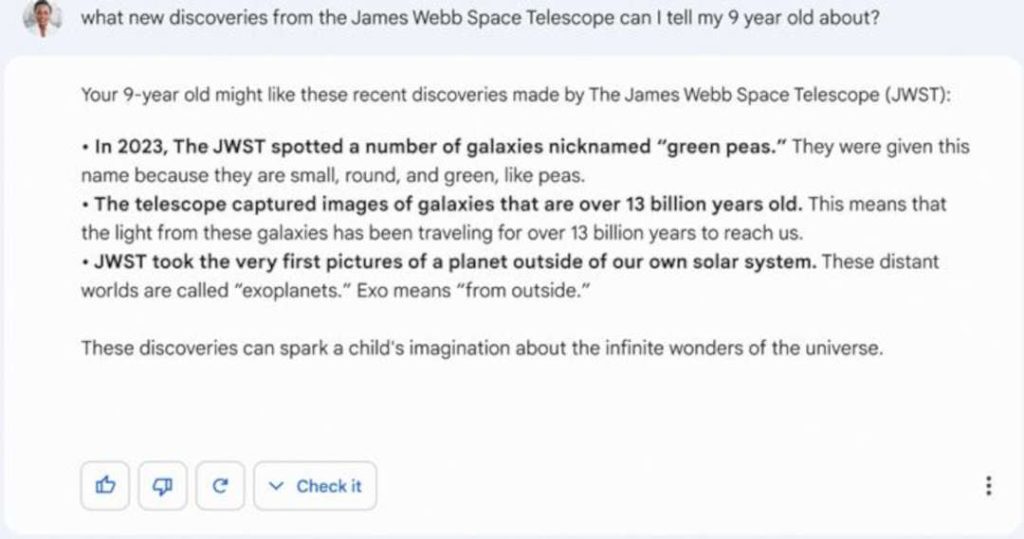

A wrong answer generated by Bard in promotional material caused the dip in Alphabet’s value. In an example of Bard’s functionality, a response was generated that contained false information about the James Webb Space Telescope (JWST). The answer stated that the JWST took the first ever picture of a planet outside of our solar system, when in reality, this achievement was accomplished by the Very Large Telescope array in Chile in 2004. This error has raised concerns about the accuracy of AI-generated answers and search engines.

Google Bard: AI-Powered Text Summaries

Google Bard was introduced as a tool to generate text summaries of search results. The AI system analyzes search queries and provides brief answers and summaries to users. The system’s primary aim is to make search results more accessible and easily understood by users.

OpenAI Admits Limitations of AI Technology

OpenAI, the creator of the language model ChatGPT, has acknowledged that AI technology, including its own, can sometimes provide incorrect or nonsensical answers. This highlights the importance of verifying information, even when it is generated by an AI system.

Transparency About Bard’s Training

Google has yet to disclose how Bard was trained to generate its answers and search result summaries. This lack of transparency raises questions about the underlying algorithms and processes used by the AI system.

Doubt Thrown on All AI-Generated Results

The launch of Google Bard and the subsequent fall in Alphabet’s share price raise important questions about the accuracy and transparency of AI-generated responses. While AI technology has the potential to improve search results and make information more accessible, it is crucial to understand its limitations and ensure that the information provided is correct. This can be achieved through greater transparency about the training and algorithms used by AI systems, and by verifying information before accepting it as truth.

Staff writer. With an extensive background in AI, Jonas covers cloud computing, big data, and distributed computing. He is also interested in the intersection of these areas with security and privacy. As an ardent gamer, he finds that reporting on the latest cross-platform innovations and releases comes as second nature.